MLP GMM Agreement

From SpeechWiki


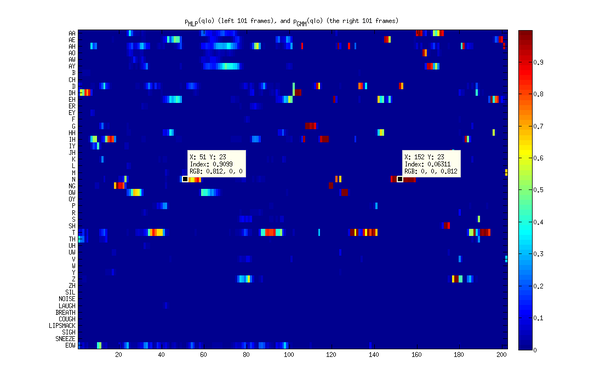

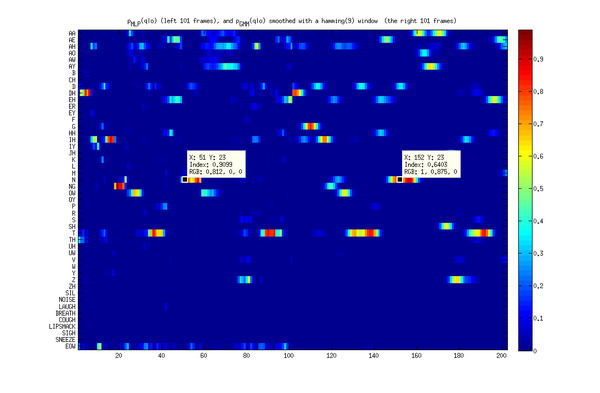

Smoothed GMMs vs MLPs and GMM accuracy

To compute the phone posterior given the observation, p(q|o), we can use either MLP activations or GMMs. If both classifiers were correct, their outputs would be equal to each other and to the target. In reality they agree only somewhat. The GMMs tend to have sharp differences between neighboring frames, while the MLPs tend to be smoother. By eyeballing p(q|o) where the GMMs and MLPs differ, it appears that the benefits of frame-replacement on segments determined from <math>p_{mlp}(q|o)</math> disappear. For example, a typical correction is /D/ -> /N/ (/N/ is the correct label). Here is what p(q|o) looks like:

Filtering the GMM p(q|o) with a Hamming window improves both the accuracy of the GMMs and their agreement with the MLPs.
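The filtering step can be sketched as follows. This is a minimal illustration and not the code used for the experiments on this page: it assumes the posteriors are stored as one list of p(q|o) values per frame, that the window is applied along time separately for each phone, and that each frame is renormalized afterwards. The names `hamming` and `smooth_posteriors` are hypothetical.

```python
import math


def hamming(n):
    """Length-n Hamming window: 0.54 - 0.46*cos(2*pi*k/(n-1)); [1.0] when n == 1."""
    if n == 1:
        return [1.0]
    return [0.54 - 0.46 * math.cos(2 * math.pi * k / (n - 1)) for k in range(n)]


def smooth_posteriors(post, n):
    """Smooth each phone's posterior track over time with a length-n Hamming window.

    post: list of frames, each a list of p(q|o) values over phones.
    n: positive odd window length; n = 1 leaves the posteriors unchanged.
    """
    if n < 1 or n % 2 == 0:
        raise ValueError("window length must be a positive odd integer")
    window = hamming(n)
    half = n // 2
    num_frames, num_phones = len(post), len(post[0])
    smoothed = []
    for t in range(num_frames):
        row = [0.0] * num_phones
        for k, w in enumerate(window):
            s = t + k - half
            if 0 <= s < num_frames:  # taps beyond the utterance edges are skipped
                for q in range(num_phones):
                    row[q] += w * post[s][q]
        total = sum(row)
        # Assumption: renormalize so each frame is again a distribution over phones.
        smoothed.append([v / total for v in row])
    return smoothed
```

With a length-5 window, a single frame whose posterior briefly flips to the wrong phone is pulled back toward its neighbors, which is the kind of sharp frame-to-frame difference described above for the GMMs.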

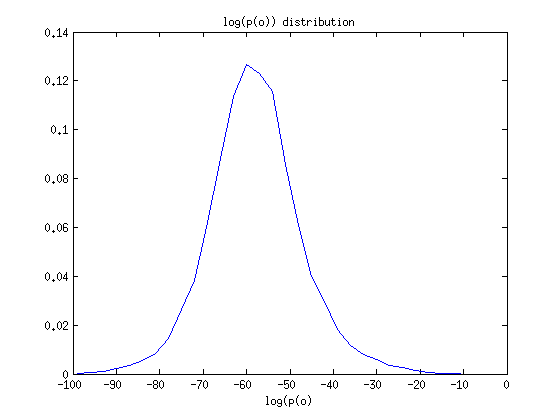

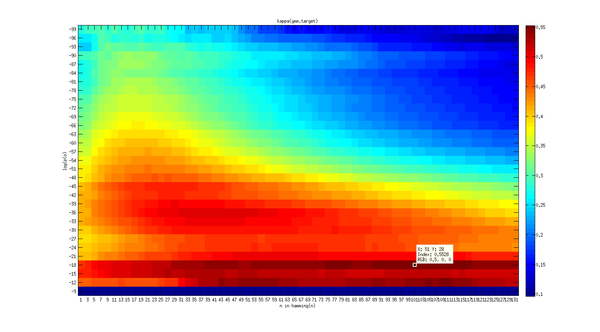

Cohen's kappa is used to measure agreement between the p(q|o) generated by GMMs and MLPs, as well as between each of them and the true target phonetic label for each frame. We can also look only at the frames that have sufficient support in the model, i.e. those frames with log(p(o)) > T, where T is some threshold. Presumably the GMM should be more accurate on those frames. p(o) has a surprisingly normal distribution, perhaps because it's a sum of 48 somewhat independent p(o,q) observations.
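As a rough sketch of this measurement, the code below computes Cohen's kappa between two per-frame label sequences, optionally restricted to frames with log(p(o)) > T. It assumes that each classifier's p(q|o) is first reduced to a hard label by taking the most probable phone (the page does not say how the posteriors were turned into labels), and that log p(o,q) is available per frame from the GMMs. All function names are hypothetical.

```python
import math
from collections import Counter


def argmax_labels(post):
    """Assumption: reduce each frame's p(q|o) to the index of its most probable phone."""
    return [max(range(len(row)), key=row.__getitem__) for row in post]


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa: (observed agreement - chance agreement) / (1 - chance agreement)."""
    n = len(labels_a)
    if n == 0 or n != len(labels_b):
        raise ValueError("label sequences must be non-empty and of equal length")
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    count_a, count_b = Counter(labels_a), Counter(labels_b)
    # Chance agreement from the two marginal label distributions.
    chance = sum(count_a[c] * count_b[c] for c in count_a) / (n * n)
    return (observed - chance) / (1.0 - chance)  # undefined when chance == 1


def log_evidence(log_joint_row):
    """log p(o) = log sum_q p(o,q), computed stably from the log p(o,q) values."""
    m = max(log_joint_row)
    return m + math.log(sum(math.exp(v - m) for v in log_joint_row))


def kappa_on_supported_frames(labels_a, labels_b, log_joint, threshold):
    """Kappa restricted to frames whose log p(o) exceeds the threshold T."""
    keep = [log_evidence(row) > threshold for row in log_joint]
    a = [x for x, k in zip(labels_a, keep) if k]
    b = [x for x, k in zip(labels_b, keep) if k]
    return cohens_kappa(a, b)
```

For example, `kappa_on_supported_frames(argmax_labels(p_mlp), argmax_labels(p_gmm), log_joint_gmm, -33)` would play the role of kappa(mlp,gmm) on the high-likelihood frames, where `p_mlp`, `p_gmm` and `log_joint_gmm` are placeholder inputs.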

The following statistics were gathered on the first 233 utterances of the training set that was already timeshrunk with tau=.9 (31402 frames).

| n in hamming(n) | kappa(mlp,gmm), all frames | kappa(gmm,target), all frames | kappa(mlp,gmm), frames o with log(p_{GMM}(o)) > -33 | kappa(gmm,target), frames o with log(p_{GMM}(o)) > -33 |
|---|---|---|---|---|
| 1 | 0.3939 | 0.3511 | 0.4878 | 0.3570 |
| 3 | 0.3986 | 0.3546 | 0.4950 | 0.3639 |
| 5 | 0.4203 | 0.3701 | 0.5226 | 0.3859 |
| 7 | 0.4334 | 0.3803 | 0.5258 | 0.3935 |
| 9 | 0.4428 | 0.3861 | 0.5363 | 0.4079 |
| 11 | 0.4500 | 0.3920 | 0.5427 | 0.4147 |
| 13 | 0.4550 | 0.3962 | 0.5509 | 0.4270 |
| 15 | 0.4584 | 0.3982 | 0.5600 | 0.4445 |
| 17 | 0.4599 | 0.3979 | 0.5531 | 0.4496 |
| 19 | 0.4593 | 0.3966 | 0.5696 | 0.4609 |
| 21 | 0.4579 | 0.3962 | 0.5637 | 0.4596 |
| 23 | 0.4553 | 0.3933 | 0.5651 | 0.4572 |

When using the unsmoothed MLP, kappa(mlp,target) = 0.2921. In this case we are using all frames, since p(o) cannot be computed from the MLP activations. The accuracy rate (|mlp == target|/|all frames|) for the MLP on the same data is 0.3250, so kappa is a bit more conservative in declaring agreement.

This accuracy is lower than the accuracy of .5707 computed on all of the non-timeshrunk training data reported in MLP Activation Features, partly because 6% of the most accurate frames were time-shrunk, and partly because the first 233 utterances were probably tougher speech than the rest of the training data.

Smoothing MLP p(q|o)

Filtering the MLP p(q|o) with a Hamming window also helps, but not enough to beat the p(q|o) from the GMMs:

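| n in hamming(n) | kappa(target,mlp) |
|---|---|
| 1 | 0.2921 |
| 3 | 0.2945 |
| 5 | 0.3019 |
| 7 | 0.3089 |
| 9 | 0.3164 |
| 11 | 0.3232 |
| 13 | 0.3305 |
| 15 | 0.3374 |
| 17 | 0.3438 |
| 19 | 0.3485 |
| 21 | 0.3526 |
| 23 | 0.3548 |
| 25 | 0.3569 |
| 27 | 0.3564 |
| 29 | 0.3557 |
| 31 | 0.3543 |
| 33 | 0.3518 |
| 35 | 0.3482 |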

Choosing the smoothing filter order

Frames with higher likelihood p(o) tend to do better with longer smoothing filters. High-likelihood frames also tend to belong to vowel-like phonemes. In any case, the Hamming window should have a length between 13 and 47.
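One way to choose the filter order empirically is to sweep over odd window lengths and keep the one with the highest kappa against the target labels, as in the tables on this page. The sketch below is only an illustration under stated assumptions: posteriors are turned into labels by taking the most probable phone, edge frames use a truncated window, and the helper names (`smooth`, `kappa`, `best_window_length`) are hypothetical.

```python
import math
from collections import Counter


def hamming(n):
    """Length-n Hamming window; [1.0] when n == 1."""
    if n == 1:
        return [1.0]
    return [0.54 - 0.46 * math.cos(2 * math.pi * k / (n - 1)) for k in range(n)]


def smooth(post, n):
    """Filter each phone's posterior track over time with a length-n Hamming window."""
    window, half = hamming(n), n // 2
    out = []
    for t in range(len(post)):
        row = [0.0] * len(post[0])
        for k, w in enumerate(window):
            s = t + k - half
            if 0 <= s < len(post):  # truncated window at the utterance edges
                for q, p in enumerate(post[s]):
                    row[q] += w * p
        out.append(row)  # no renormalization: only the argmax is used below
    return out


def kappa(labels_a, labels_b):
    """Cohen's kappa between two equal-length label sequences."""
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    ca, cb = Counter(labels_a), Counter(labels_b)
    chance = sum(ca[c] * cb[c] for c in ca) / (n * n)
    return (observed - chance) / (1.0 - chance)


def best_window_length(post, target, lengths):
    """Return (best n, {n: kappa(target, smoothed labels)}) over the given odd lengths."""
    scores = {}
    for n in lengths:
        labels = [max(range(len(r)), key=r.__getitem__) for r in smooth(post, n)]
        scores[n] = kappa(labels, target)
    return max(scores, key=scores.get), scores
```

To reflect the observation that high-likelihood frames prefer longer filters, the same sweep could be run separately on frames above and below a log(p(o)) threshold.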
