Fisher Language Model

From SpeechWiki


Revision as of 16:41, 29 September 2008

<bibimport />

The design choices behind the various language models built on the Fisher Corpus are described below.


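The Scripts, Data and Generated Language Models

The implementation uses the [http://www.speech.sri.com/projects/srilm/ SRILM toolkit], and some of the scripts are taken from this [http://www.inference.phy.cam.ac.uk/kv227/lm_giga/ gigaword LM recipe]. The main script is [http://mickey.ifp.uiuc.edu/speech/akantor/fisher/scr/LM/go_all.sh go_all.sh], and supporting scripts are [http://mickey.ifp.uiuc.edu/speech/akantor/fisher/scr/LM/ here].

The data and models are [http://mickey.ifp.uiuc.edu/speech/akantor/fisher/LM/ here].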

Source Text

The language model is built using only the repaired training data transcriptions, where each unique partial word is treated as a separate word.

N-gram order

2-gram, 3-gram and 4-gram models are built. Higher orders are not worth the extra computational resources they require in an ASR system: going from 4-grams to 5-grams lowers the entropy by only 0.06 bits<bib id='Goodman2001abit' />, and essentially nothing is gained beyond 5-grams.

Vocab Sizes

The generated LMs use only the N most frequent words and map the rest to the UNK token, where N is:

| N | Comparable vocab sizes |

|---|---|

| 500 | SVitchboard vocab size used by JHU06 workshop |

| 1000 | |

| 5000 | |

| 10000 | Similar to Switchboard vocab size used by JANUS speech recognition group (9800) |

| 20k | WSJ/NAB vocab size used in the 1995 ARPA continuous speech evaluation |

| 70957 | All repaired words in the training data. |

Which of these sizes will actually be used in the recognizer still remains to be seen.
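The vocabulary truncation described above can be sketched as follows. This is a minimal illustration, not the code used by the actual scripts: the function names are hypothetical, and the spelling `<unk>` for the UNK token is an assumption.

```python
from collections import Counter

UNK = "<unk>"  # assumed spelling of the unknown-word token


def top_n_vocab(sentences, n):
    """Return the set of the n most frequent words in tokenized sentences."""
    counts = Counter(word for sentence in sentences for word in sentence)
    return {word for word, _ in counts.most_common(n)}


def map_to_unk(sentence, vocab):
    """Replace every word outside the vocabulary with the UNK token."""
    return [word if word in vocab else UNK for word in sentence]


# Toy training transcriptions (made up for illustration).
train = [
    "yeah i know".split(),
    "i know what you mean".split(),
    "yeah i do".split(),
]
vocab = top_n_vocab(train, 3)
mapped = map_to_unk("i do know yeah".split(), vocab)
```

With N = 3 the toy vocabulary keeps only "i", "yeah" and "know", so "do" in the last sentence is mapped to the UNK token.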

Some experiments

The model quality is reported as the cross-entropy per word, in bits: the negative log (base 2) probability that the model estimated from the training data assigns to the test data, divided by the number of tokens in the test corpus. See Perplexity for more details. The percentage of tokens in the test data that do not occur in the vocabulary (out-of-vocabulary, or OOV, %) is also reported.
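As a rough sketch of the two reported quantities, assuming the per-token probabilities assigned by a model are already available (the function names and the toy numbers are hypothetical, and the sketch does not decide how OOV tokens enter the average):

```python
import math


def cross_entropy_per_word(word_probs):
    """Average negative log2 probability (bits per token) of the test tokens."""
    total_bits = -sum(math.log2(p) for p in word_probs)
    return total_bits / len(word_probs)


def oov_percent(tokens, vocab):
    """Percentage of tokens that are not in the vocabulary."""
    misses = sum(1 for token in tokens if token not in vocab)
    return 100.0 * misses / len(tokens)


# Hypothetical probabilities a model assigns to four test tokens.
probs = [0.5, 0.25, 0.125, 0.125]
entropy = cross_entropy_per_word(probs)  # (1 + 2 + 3 + 3) / 4 bits
perplexity = 2 ** entropy                # perplexity is 2 to the cross-entropy

oov = oov_percent(["a", "b", "c", "d"], {"a", "b", "c"})
```

Lower cross-entropy means the model finds the test data less surprising; the perplexity is the same quantity on an exponential scale.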

The experiments consider:

- the n-gram order (2, 3, or 4)

- the vocab size (500, 1k, 5k, 10k, 20k, all)

- choice of smoothing (modified Kneser-Ney or Good-Turing)

- using entropy pruning or not

| n-gram order | vocab | total n-grams | smoothing | pruning | dev entropy | dev OOV % | test entropy | test OOV % |

|---|---|---|---|---|---|---|---|---|

| 2-gram | 500 | 127889 | GT | no-pr | 5.802 | 15.461 | 5.858 | 15.093 |

| 2-gram | 500 | 60296 | GT | pr | 5.845 | 15.461 | 5.903 | 15.093 |

| 2-gram | 500 | 127889 | KN | no-pr | 5.801 | 15.461 | 5.857 | 15.093 |

| 2-gram | 500 | 70752 | KN | pr | 6.142 | 15.461 | 6.204 | 15.093 |

| 2-gram | 1k | 266359 | GT | no-pr | 6.247 | 10.013 | 6.288 | 9.806 |

| 2-gram | 1k | 100363 | GT | pr | 6.29 | 10.013 | 6.332 | 9.806 |

| 2-gram | 1k | 266359 | KN | no-pr | 6.243 | 10.013 | 6.284 | 9.806 |

| 2-gram | 1k | 121297 | KN | pr | 6.452 | 10.013 | 6.503 | 9.806 |

| 2-gram | 5k | 781554 | GT | no-pr | 6.922 | 3.117 | 6.956 | 2.917 |

| 2-gram | 5k | 196611 | GT | pr | 6.96 | 3.117 | 6.992 | 2.917 |

| 2-gram | 5k | 781554 | KN | no-pr | 6.908 | 3.117 | 6.943 | 2.917 |

| 2-gram | 5k | 265487 | KN | pr | 6.942 | 3.117 | 6.976 | 2.917 |

| 2-gram | 10k | 1013070 | GT | no-pr | 7.098 | 1.663 | 7.121 | 1.526 |

| 2-gram | 10k | 224037 | GT | pr | 7.146 | 1.663 | 7.168 | 1.526 |

| 2-gram | 10k | 1013070 | KN | no-pr | 7.079 | 1.663 | 7.105 | 1.526 |

| 2-gram | 10k | 327131 | KN | pr | 7.125 | 1.663 | 7.149 | 1.526 |

| 2-gram | 20k | 1196182 | GT | no-pr | 7.214 | 0.81 | 7.228 | 0.748 |

| 2-gram | 20k | 243371 | GT | pr | 7.269 | 0.81 | 7.281 | 0.748 |

| 2-gram | 20k | 1196182 | KN | no-pr | 7.193 | 0.81 | 7.209 | 0.748 |

| 2-gram | 20k | 387048 | KN | pr | 7.247 | 0.81 | 7.261 | 0.748 |

| 2-gram | all | 1413153 | GT | no-pr | 7.331 | 0.271 | 7.338 | 0.254 |

| 2-gram | all | 292708 | GT | pr | 7.389 | 0.271 | 7.392 | 0.254 |

| 2-gram | all | 1413153 | KN | no-pr | 7.315 | 0.271 | 7.323 | 0.254 |

| 2-gram | all | 503370 | KN | pr | 7.371 | 0.271 | 7.377 | 0.254 |

| 3-gram | 500 | 919391 | GT | no-pr | 5.463 | 15.461 | 5.516 | 15.093 |

| 3-gram | 500 | 201453 | GT | pr | 5.496 | 15.461 | 5.548 | 15.093 |

| 3-gram | 500 | 1844779 | KN | no-pr | 5.453 | 15.461 | 5.507 | 15.093 |

| 3-gram | 500 | 456598 | KN | pr | 5.567 | 15.461 | 5.62 | 15.093 |

| 3-gram | 1k | 1309423 | GT | no-pr | 5.878 | 10.013 | 5.915 | 9.806 |

| 3-gram | 1k | 267219 | GT | pr | 5.926 | 10.013 | 5.962 | 9.806 |

| 3-gram | 1k | 2902901 | KN | no-pr | 5.86 | 10.013 | 5.899 | 9.806 |

| 3-gram | 1k | 544497 | KN | pr | 5.947 | 10.013 | 5.989 | 9.806 |

| 3-gram | 5k | 2098252 | GT | no-pr | 6.543 | 3.117 | 6.573 | 2.917 |

| 3-gram | 5k | 375374 | GT | pr | 6.618 | 3.117 | 6.645 | 2.917 |

| 3-gram | 5k | 5251482 | KN | no-pr | 6.511 | 3.117 | 6.544 | 2.917 |

| 3-gram | 5k | 673910 | KN | pr | 6.632 | 3.117 | 6.67 | 2.917 |

| 3-gram | 10k | 2342585 | GT | no-pr | 6.722 | 1.663 | 6.741 | 1.526 |

| 3-gram | 10k | 400424 | GT | pr | 6.809 | 1.663 | 6.824 | 1.526 |

| 3-gram | 10k | 5934715 | KN | no-pr | 6.686 | 1.663 | 6.709 | 1.526 |

| 3-gram | 10k | 716844 | KN | pr | 6.819 | 1.663 | 6.846 | 1.526 |

| 3-gram | 20k | 2516904 | GT | no-pr | 6.842 | 0.81 | 6.851 | 0.748 |

| 3-gram | 20k | 417045 | GT | pr | 6.935 | 0.81 | 6.94 | 0.748 |

| 3-gram | 20k | 6366532 | KN | no-pr | 6.803 | 0.81 | 6.816 | 0.748 |

| 3-gram | 20k | 760104 | KN | pr | 6.944 | 0.81 | 6.961 | 0.748 |

| 3-gram | all | 2713750 | GT | no-pr | 6.962 | 0.271 | 6.963 | 0.254 |

| 3-gram | all | 465498 | GT | pr | 7.057 | 0.271 | 7.053 | 0.254 |

| 3-gram | all | 6737974 | KN | no-pr | 6.928 | 0.271 | 6.933 | 0.254 |

| 3-gram | all | 858818 | KN | pr | 7.068 | 0.271 | 7.077 | 0.254 |

| 4-gram | 500 | 2456011 | GT | no-pr | 5.401 | 15.461 | 5.455 | 15.093 |

| 4-gram | 500 | 264641 | GT | pr | 5.433 | 15.461 | 5.486 | 15.093 |

| 4-gram | 500 | 7576736 | KN | no-pr | 5.398 | 15.461 | 5.453 | 15.093 |

| 4-gram | 500 | 569016 | KN | pr | 5.695 | 15.461 | 5.75 | 15.093 |

| 4-gram | 1k | 2899330 | GT | no-pr | 5.822 | 10.013 | 5.859 | 9.806 |

| 4-gram | 1k | 326234 | GT | pr | 5.869 | 10.013 | 5.905 | 9.806 |

| 4-gram | 1k | 10204831 | KN | no-pr | 5.811 | 10.013 | 5.85 | 9.806 |

| 4-gram | 1k | 602069 | KN | pr | 6.048 | 10.013 | 6.096 | 9.806 |

| 4-gram | 5k | 3534140 | GT | no-pr | 6.492 | 3.117 | 6.521 | 2.917 |

| 4-gram | 5k | 425285 | GT | pr | 6.57 | 3.117 | 6.596 | 2.917 |

| 4-gram | 5k | 14591103 | KN | no-pr | 6.466 | 3.117 | 6.499 | 2.917 |

| 4-gram | 5k | 681505 | KN | pr | 6.681 | 3.117 | 6.723 | 2.917 |

| 4-gram | 10k | 3717947 | GT | no-pr | 6.673 | 1.663 | 6.691 | 1.526 |

| 4-gram | 10k | 448768 | GT | pr | 6.762 | 1.663 | 6.777 | 1.526 |

| 4-gram | 10k | 15603196 | KN | no-pr | 6.642 | 1.663 | 6.664 | 1.526 |

| 4-gram | 10k | 719912 | KN | pr | 6.864 | 1.663 | 6.897 | 1.526 |

| 4-gram | 20k | 3856702 | GT | no-pr | 6.793 | 0.81 | 6.801 | 0.748 |

| 4-gram | 20k | 464271 | GT | pr | 6.889 | 0.81 | 6.894 | 0.748 |

| 4-gram | 20k | 16181859 | KN | no-pr | 6.759 | 0.81 | 6.771 | 0.748 |

| 4-gram | 20k | 762636 | KN | pr | 6.987 | 0.81 | 7.01 | 0.748 |

| 4-gram | all | 4031432 | GT | no-pr | 6.913 | 0.271 | 6.913 | 0.254 |

| 4-gram | all | 512518 | GT | pr | 7.011 | 0.271 | 7.006 | 0.254 |

| 4-gram | all | 16621480 | KN | no-pr | 6.884 | 0.271 | 6.889 | 0.254 |

| 4-gram | all | 862439 | KN | pr | 7.109 | 0.271 | 7.124 | 0.254 |

Conclusions

Smoothing Alone

Modified Kneser-Ney beats Good-Turing across all n-gram orders and vocab sizes, confirming the results in <bib id='Chen1998empirical' />.

Smoothing and Pruning

To make the language model smaller, it is common to drop n-grams which occur fewer than k times. Alternatively, one can drop n-grams whose removal increases the entropy of the model by less than some threshold, as described in <bib id='Stolcke1998entropy-based' />.
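The simpler of the two strategies, the count cutoff, can be sketched in a few lines. This is only an illustration with a hypothetical function name and toy data; it does not implement entropy pruning, which needs the model probabilities rather than raw counts.

```python
from collections import Counter


def apply_count_cutoff(ngram_counts, k):
    """Keep only the n-grams that occur at least k times."""
    return {ngram: count for ngram, count in ngram_counts.items() if count >= k}


# Toy bigram counts from a made-up token sequence.
tokens = "i know i know you know".split()
bigram_counts = Counter(zip(tokens, tokens[1:]))
kept = apply_count_cutoff(bigram_counts, 2)
```

Here only the bigram ("i", "know") occurs twice, so it is the only one that survives a cutoff of k = 2.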

Good-Turing smoothing with entropy pruning generally outperforms modified Kneser-Ney smoothing with entropy pruning. In the experiments above, this is true for all 3- and 4-gram models, despite the GT models having many fewer n-grams. For pruned 2-gram models with vocabularies of 5k words or more, the entropy is about the same for GT and KN smoothing (KN is ahead by roughly 0.02 bits), but the GT models have many fewer n-grams; for the 500 and 1k vocabularies, GT is clearly better. This is consistent with the findings in <bib id='Siivola2007On' />.
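The claim for the pruned 3- and 4-gram models can be checked directly against the experiments table. The sketch below copies the n-gram counts and dev entropies of the pruned rows; the variable names are only illustrative.

```python
# (order, vocab) -> {smoothing: (total n-grams, dev entropy)}, pruned models only,
# copied from the 3-gram and 4-gram rows of the experiments table.
pruned = {
    (3, "500"): {"GT": (201453, 5.496), "KN": (456598, 5.567)},
    (3, "1k"): {"GT": (267219, 5.926), "KN": (544497, 5.947)},
    (3, "5k"): {"GT": (375374, 6.618), "KN": (673910, 6.632)},
    (3, "10k"): {"GT": (400424, 6.809), "KN": (716844, 6.819)},
    (3, "20k"): {"GT": (417045, 6.935), "KN": (760104, 6.944)},
    (3, "all"): {"GT": (465498, 7.057), "KN": (858818, 7.068)},
    (4, "500"): {"GT": (264641, 5.433), "KN": (569016, 5.695)},
    (4, "1k"): {"GT": (326234, 5.869), "KN": (602069, 6.048)},
    (4, "5k"): {"GT": (425285, 6.570), "KN": (681505, 6.681)},
    (4, "10k"): {"GT": (448768, 6.762), "KN": (719912, 6.864)},
    (4, "20k"): {"GT": (464271, 6.889), "KN": (762636, 6.987)},
    (4, "all"): {"GT": (512518, 7.011), "KN": (862439, 7.109)},
}

# GT wins a configuration if it has both fewer n-grams and lower dev entropy.
gt_smaller_and_better = all(
    models["GT"][0] < models["KN"][0] and models["GT"][1] < models["KN"][1]
    for models in pruned.values()
)
```

For every listed configuration the pruned GT model is both smaller and lower in dev entropy than the pruned KN model.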

Which model should we use?

Clearly, we want either GT smoothing with pruning or KN smoothing without pruning. Beyond this, we have to somehow estimate the effect of the LM on the overall WER, and make some guess at compute time as a function of n-gram order, number of n-grams and vocab size. I don't know any good ways to make these estimates, but roughly:

- From <bib id='Goodman2001abit' />, we can estimate that a 1-bit improvement in LM entropy yields a WER improvement of around 1.5% at a starting WER of around 9% (Fig. 14 of <bib id='Goodman2001abit' />), and a 4% WER improvement at a starting WER in the range of 35% to 52% (Fig. 15 of <bib id='Goodman2001abit' />).

- We are guessing our starting WER will probably be somewhere around 40%-50%.

- The WER is at least the OOV rate, and probably higher, because an OOV word hurts the recognition of the neighboring words, and maybe of the entire utterance.

- We don't know the effect of increased confusability due to the increased vocab size on the WER, so assume it doesn't exist.

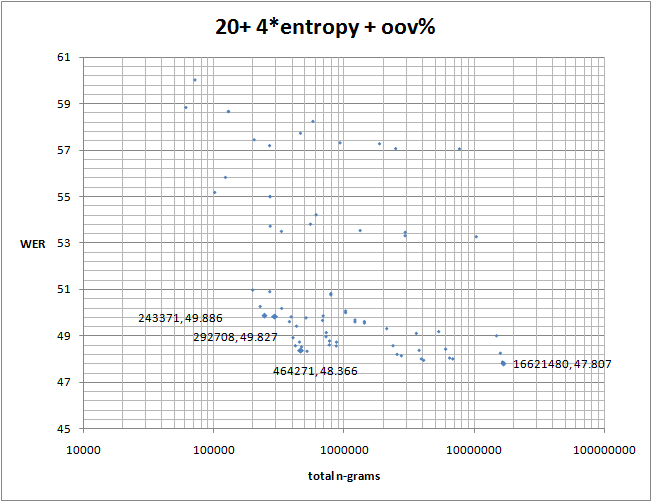

So a really simple model of WER as a function of entropy H is: <math>WER(H)=startingWER+OOVrate+4*H-offset</math>

Plotting it vs. the number of n-grams, we get

The rough horizontal stripes correspond to different vocab sizes, with the 500 word vocab (and corresponding 15% OOV) being at the top. If we include the confusability-of-large-vocab effect, the stripes would move up, with bottom stripes moving up more.
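The simple WER model can be written out as a small function. The starting WER of 45 and the offset of 0 used below are placeholders chosen for illustration, not values given above; the difference between two models does not depend on either of them.

```python
def wer_estimate(entropy_bits, oov_percent, starting_wer, offset, wer_per_bit=4.0):
    """WER(H) = startingWER + OOVrate + 4*H - offset, all in percentage points."""
    return starting_wer + oov_percent + wer_per_bit * entropy_bits - offset


# Pruned GT 3-gram models, test-set columns of the experiments table.
# starting_wer=45.0 and offset=0.0 are placeholder assumptions.
wer_5k = wer_estimate(6.645, 2.917, starting_wer=45.0, offset=0.0)
wer_10k = wer_estimate(6.824, 1.526, starting_wer=45.0, offset=0.0)

# Positive difference: the model predicts a lower WER for the 10k vocab.
difference = wer_5k - wer_10k
```

Under this model the lower OOV rate of the 10k vocabulary outweighs its higher entropy, which is why the stripes for larger vocabularies sit lower in the plot.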

The relationship between the number of n-grams and the computational time (i.e. how likely we are to retain the correct hypothesis in the beam) is no easier to estimate, so we might as well consider options to the left of the knee in the curve (i.e. fewer than 500000 n-grams).

So, to me a decent choice looks like a GT-smoothed, entropy-pruned 2-gram or 3-gram model, with a 5k or 10k vocab. Will it work? Who knows...

Do not read: Rantings, and leftovers

Differences between ngram-count and make-big-lm

There is some subtle difference between ngram-count and make-big-lm, which I cannot track down.

make-big-lm -name .big -order 3 -sort -read ./LM/counts/ngrams -kndiscount -lm try -unk -debug 1

and

ngram-count -order 3 -read LM/counts/ngrams -kn1 LM/counts/kn1-3.txt -kn2 LM/counts/kn2-3.txt -kn3 LM/counts/kn3-3.txt -kn4 LM/counts/kn4-3.txt -kn5 LM/counts/kn5-3.txt -kn6 LM/counts/kn6-3.txt -kn7 LM/counts/kn7-3.txt -kn8 LM/counts/kn8-3.txt -kn9 LM/counts/kn9-3.txt -kndiscount1 -kndiscount2 -lm try -interpolate -debug 1

give different results, even though the KN discounts are identical in both cases above.

ngram-count seems to do better on vocab sizes < 20k, and make-big-lm is slightly better on the full vocab.

I suspect it has something to do with make-big-lm allocating 0.05 of the total probability to the unk token for some reason, while ngram-count allocates almost nothing to it.

Compare the following table against the results table in the Some experiments section above.

| n-gram order | vocab | ngram count | dev entropy | dev OOV % | test entropy | test OOV % |

|---|---|---|---|---|---|---|

| 2-gram | 5k | ngram 1=5000 ngram 2=580572 ngram 3=0 | 6.924 | 3.117 | 6.958 | 2.917 |

| 2-gram | 20k | ngram 1=20000 ngram 2=980894 ngram 3=0 | 7.200 | 0.810 | 7.217 | 0.748 |

| 2-gram | all | ngram 1=70957 ngram 2=1180725 ngram 3=0 | 7.286 | 0.271 | 7.297 | 0.254 |

| 3-gram | 5k | ngram 1=5000 ngram 2=776554 ngram 3=3234773 | 6.530 | 3.117 | 6.566 | 2.917 |

| 3-gram | 20k | ngram 1=20000 ngram 2=1176182 ngram 3=4038261 | 6.809 | 0.810 | 6.827 | 0.748 |

| 3-gram | all | ngram 1=70957 ngram 2=1342196 ngram 3=4201973 | 6.898 | 0.271 | 6.910 | 0.254 |

Caching, Clustering and all that

Not worth trying, because trigrams are already fairly space-efficient (see Section 11.2, "All hope abandon, ye who enter here", in <bib id='Goodman2001abit' />).

In particular, they have this to say about the best smoothing strategy they themselves have developed:

- "Kneser-Ney smoothing leads to improvements in theory, but in practice, most language models are built with high count cutoffs, to conserve space, and speed the search; with high count cutoffs, smoothing doesn’t matter."

References

<references/>

<bibprint />