Fisher Baseline Experiments

From SpeechWiki


Latest revision as of 03:57, 30 July 2010

A set of traditional phone-based baselines against which to compare our mixed-unit systems. An implementation of it using GMTK and associated scripts is here.


Multi-pronunciation Monophone Model

The initial model is a monophone model, with the number of states per phone specified in phone.state and 64 Gaussians per state.

The dictionary used allows multiple pronunciations (up to 6). See more details here.

Training on the entire Fisher training set (1775831 utterances, specified in Fisher Corpus) takes an exceedingly long time. A single EM iteration takes more than 15 hours on a 32-CPU cluster. Reaching 64-Gaussian mixtures takes about 20 cluster compute days, doubling the number of Gaussians after converging with 6 iterations at each size.
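The following is a rough check of the ~20 cluster-day figure. It is a sketch under assumptions not stated above: training starts from 1 Gaussian per state, the model is doubled six times to reach 64, each of the six doubled sizes gets 6 EM iterations, each iteration takes exactly 15 hours, and the initial single-Gaussian training is ignored.

```python
import math

HOURS_PER_EM_ITER = 15   # "more than 15 hours" per iteration, so this is a lower bound
ITERS_PER_LEVEL = 6      # EM iterations to convergence after each doubling
TARGET_GAUSSIANS = 64    # final Gaussians per state


def training_days(target=TARGET_GAUSSIANS, iters=ITERS_PER_LEVEL, hours=HOURS_PER_EM_ITER):
    """Wall-clock days for an assumed split-and-retrain schedule."""
    doublings = int(math.log2(target))   # 1 -> 2 -> 4 -> ... -> 64 is 6 doublings
    total_iters = doublings * iters      # iterations run after each doubling
    return total_iters * hours / 24.0


print(f"{training_days():.1f} days")  # prints "22.5 days"
```

This gives 36 iterations, or 22.5 days. That is the same order as the quoted figure, and since 15 hours is a lower bound, the real number is likely somewhat higher. Dividing by the roughly 6x speedup reported below for word-aligned training gives 3.75 days, in line with the observed 3-4 days.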

Possible solutions are:

- Train on data where word boundaries are observed (thanks, Chris). For this we need to force-align the entire Fisher corpus using some existing recognizer.

- Use a smaller corpus.

- Use TeraGrid (our allocation of 30000 CPU-hours is only about 40 days on our cluster, which is barely worth the effort of porting).

- Experiment with better triangulations (not much hope for improvement, since the random variables within each frame are densely connected in our DBN).
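To put the TeraGrid option in perspective, the sketch below converts the allocation into wall-clock days on our local cluster. It assumes all 32 CPUs are busy around the clock.

```python
ALLOCATION_CPU_HOURS = 30_000  # TeraGrid allocation quoted above
CLUSTER_CPUS = 32              # size of our local cluster


def allocation_in_cluster_days(cpu_hours=ALLOCATION_CPU_HOURS, cpus=CLUSTER_CPUS):
    """Convert a CPU-hour allocation into days on a fully loaded cluster."""
    return cpu_hours / cpus / 24.0


print(f"{allocation_in_cluster_days():.1f} days")  # prints "39.1 days"
```

The result, 30000 / 32 / 24 ≈ 39 days, matches the ~40 days quoted. That is roughly two 64-Gaussian training runs at the ~20 cluster-day estimate above.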

Training on word-aligned data

To speed up training, I used the above model to obtain word boundaries by forced alignment (see the forceAlign.pl script). The word boundaries are in wordObservationsWordAligned.scp. Some of the utterances could not be word-aligned to their transcriptions, so transcription and scp files omitting those bad utterances were also generated.
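The bookkeeping for dropping unalignable utterances can be sketched as follows. The file layout here is hypothetical. We assume that line i of the scp file and line i of the transcription file describe the same utterance, and that the alignment step reports failures as a set of line indices. forceAlign.pl itself may work differently.

```python
from typing import List, Set, Tuple


def drop_failed_utterances(
    scp_lines: List[str],
    transcripts: List[str],
    failed: Set[int],
) -> Tuple[List[str], List[str]]:
    """Return scp and transcription lists with failed utterance indices removed.

    Hypothetical layout: line i of both inputs refers to the same utterance.
    """
    if len(scp_lines) != len(transcripts):
        raise ValueError("scp and transcription lists must have the same length")
    kept = [i for i in range(len(scp_lines)) if i not in failed]
    return [scp_lines[i] for i in kept], [transcripts[i] for i in kept]


scp, trn = drop_failed_utterances(["utt0.htk", "utt1.htk"], ["hello", "yeah"], {1})
print(scp, trn)
```

Keeping the two lists filtered by the same index set is what keeps observations and transcriptions in sync after the bad utterances are removed.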

The model with observed word boundaries could be trained about 6 times faster, and took about 3-4 days to train. The model from word-aligned training is in mpWAmodel dir.

Decoding and Scoring

Decoding is done by GMTKViterbiNew and scoring by sclite.
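For reference, the core quantity sclite reports is WER = (S + D + I) / N. Here S, D and I are the substitutions, deletions and insertions in a minimum-edit alignment of the hypothesis to the N reference words. The sketch below uses a plain unweighted Levenshtein alignment. sclite's own alignment costs and its handling of optionally deletable words are not reproduced, so numbers can differ slightly.

```python
from typing import List, Tuple


def align_counts(ref: List[str], hyp: List[str]) -> Tuple[int, int, int]:
    """Return (substitutions, deletions, insertions) of a minimum-edit alignment."""
    n, m = len(ref), len(hyp)
    # dp[i][j] = (cost, subs, dels, ins) for aligning ref[:i] with hyp[:j]
    dp = [[(0, 0, 0, 0)] * (m + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        dp[i][0] = (i, 0, i, 0)          # delete all reference words
    for j in range(1, m + 1):
        dp[0][j] = (j, 0, 0, j)          # insert all hypothesis words
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            c, s, d, ins = dp[i - 1][j - 1]
            if ref[i - 1] == hyp[j - 1]:
                best = (c, s, d, ins)                  # match
            else:
                best = (c + 1, s + 1, d, ins)          # substitution
            c, s, d, ins = dp[i - 1][j]
            best = min(best, (c + 1, s, d + 1, ins))   # deletion
            c, s, d, ins = dp[i][j - 1]
            best = min(best, (c + 1, s, d, ins + 1))   # insertion
            dp[i][j] = best
    _, s, d, ins = dp[n][m]
    return s, d, ins


def wer(ref: List[str], hyp: List[str]) -> float:
    """Word error rate as a fraction of the reference length."""
    s, d, ins = align_counts(ref, hyp)
    return (s + d + ins) / len(ref)


print(wer("the cat sat on the mat".split(), "the cat sat on mat".split()))
```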

Preliminary Results

Tested on the first 500 utterances of the Dev Set, using the following language models. LM_SCALE and LM_PENALTY were not tuned. The language model and scoring treat all word fragments, filled pauses, and non-speech events as distinct words, and so the WER reported is conservative.

| Row | Vocab | Lang Model | Config | WER | Entropy |

|---|---|---|---|---|---|

| 1 | 10000 | bigram | config 0 | 67.3% | 7.146 |

| 2 | 10000 | trigram | config 9 | 67.2% | 6.809 |

| 3 | 5000 | trigram | config 10 | 67.7% | 6.618 |

| 4 | 1000 | trigram | config 11 | 69.5% | 5.926 |

| 5 | 500 | trigram | config 12 | 71.6% | 5.496 |
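The Entropy column may be the per-word cross-entropy of the LM in bits; this is an assumption, since the units are not given. If so, it converts to perplexity as PP = 2^H. The sketch below does that conversion for the five rows. If the entropy is in nats, the conversion would be e^H instead.

```python
# Entropy column from the table above, keyed by row (assumed: bits per word)
ENTROPY = {1: 7.146, 2: 6.809, 3: 6.618, 4: 5.926, 5: 5.496}


def perplexity_from_bits(h: float) -> float:
    """Perplexity corresponding to a per-word cross-entropy of h bits."""
    return 2.0 ** h


for row, h in ENTROPY.items():
    print(f"row {row}: H = {h:.3f} bits, perplexity = {perplexity_from_bits(h):.1f}")
```

Under this reading, perplexity falls from about 142 (10k vocab bigram) to about 45 (500-word vocab) while WER rises. This is presumably because more test words fall outside the smaller vocabularies.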

Testing the word-aligned model quality

Tested on the first 500 utterances of the Dev Set, using the 10k vocab 3-gram LM with no word fragments and no <unk>. Filled pauses (uh, huh, etc.) and non-speech events (e.g. [LAUGH]) were mapped to an optionally deletable %hesitation, and word fragments were allowed to match the prefix or suffix of the hypothesized word. This setup is identical to row 2 in the next table, with the bigram replaced by a trigram and mpModel replaced by mpWAModel. The model in mpWAModel yields 65.6% WER, about 0.9% absolute worse than the model trained without observed word boundaries.

Decoding and Scoring as NIST does it

Tested on the first 500 utterances of the Dev Set, using the 10k vocab 2-gram LM. LM_SCALE and LM_PENALTY were not tuned (fixed at 10 and -1, respectively).

| Row | LM | post-viterbi processing and sclite Scoring rules | Config | WER |

|---|---|---|---|---|

| 1 | Same as row 1 in prev section (for comparison purposes) | filled pauses and word fragments treated as distinct words; identical to the score in the prev table | config 0 | 67.3% |

| 2 | Same as in prev section, but vocab contains no word fragments (vocab filled in with more whole words to reach 10k), and no <unk> | word fragments match any word with common prefix/suffix, filled pauses and non-speech sounds mapped to %hesitation, and are optionally deletable | config 0 | 64.7% |

| 3 | Same as row 1 in prev section | word fragments deleted in post-viterbi processing, and made optionally deletable in reference transcription | config 17 | 66.6% |

| 4 | Same as row 1 in prev section | word fragments and <unk> deleted in post-viterbi processing (but <unk> is allowed in the language model), and made optionally deletable in reference transcription | config 17 | 64.9% |

| 5 | 20k vocab, 3-gram, GT smoothed, pruned, no word fragments, no <unk> | word fragments match common prefix/suffix, filled pauses and non-speech sounds mapped to %hesitation, and are optionally deletable | config 13 | 64.9% |
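The scoring rules in rows 2 and 5 can be sketched as token-level normalization. This is only an illustration, and it rests on several assumptions:

- The filled-pause list is a hypothetical subset (the text names only uh and huh).
- Non-speech events are bracketed like [LAUGH].
- Word fragments are marked with a trailing hyphen (prefix kept, e.g. th-) or a leading hyphen (suffix kept).

The real pipeline used sclite with NIST-style rules, which this does not reproduce.

```python
FILLED_PAUSES = {"uh", "huh"}  # hypothetical subset; extend with the full list


def normalize(token: str) -> str:
    """Map filled pauses and bracketed non-speech events to %hesitation."""
    t = token.lower()
    if t in FILLED_PAUSES or (t.startswith("[") and t.endswith("]")):
        return "%hesitation"
    return t


def is_optional(token: str) -> bool:
    """Tokens normalized to %hesitation may be deleted without penalty."""
    return normalize(token) == "%hesitation"


def fragment_matches(fragment: str, word: str) -> bool:
    """Match a word fragment against a hypothesized whole word.

    'th-' keeps a prefix and matches words starting with 'th';
    '-ing' keeps a suffix and matches words ending with 'ing'.
    """
    if fragment.endswith("-"):
        return word.startswith(fragment[:-1])
    if fragment.startswith("-"):
        return word.endswith(fragment[1:])
    return fragment == word


print([normalize(t) for t in ["Uh", "[LAUGH]", "yeah"]])
```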

In the future, this transcription translation file (http://itl.nist.gov/iad/mig/tests/rt/2004-spring/devset/transcriptions/en20040402.glm) could be useful for Fisher too, although it will obviously be incomplete.

Single Pronunciation Dictionary Experiment

This is the first experiment supporting my thesis.

A single-pronunciation dictionary, fisherPhoneticSpronDict.txt, was generated from the multiple-pronunciation dictionary fisherPhoneticMpronDict.txt by selecting each word's highest-probability pronunciation. The probabilities were learned with Baum-Welch in the mpModel.
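The selection rule is a per-word argmax over the learned pronunciation probabilities. The minimal sketch below assumes a hypothetical in-memory format in which each word maps to a list of (phone tuple, probability) pairs. The on-disk dictionary format is not described here, and the phone symbols in the example are illustrative.

```python
from typing import Dict, List, Tuple

Pron = Tuple[str, ...]


def to_single_pron(mpron: Dict[str, List[Tuple[Pron, float]]]) -> Dict[str, Pron]:
    """Keep only the most probable pronunciation of each word (first one on ties)."""
    return {word: max(prons, key=lambda pp: pp[1])[0] for word, prons in mpron.items()}


mpron = {
    "either": [(("iy", "dh", "er"), 0.6), (("ay", "dh", "er"), 0.4)],
    "a": [(("ax",), 0.7), (("ey",), 0.3)],
}
print(to_single_pron(mpron))
```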

Here we use the post-Viterbi processing and sclite scoring that gave the best score in the previous section (row 2).

| Row | LM | Dictionary | Config | WER | compute time |

|---|---|---|---|---|---|

| 1 (identical to row 2 in previous table) | 10k vocab bigram, GT smoothed, pruned | Multi-pron | config 0 | 64.7% | 2:56 hours |

| 2 | 10k vocab bigram, GT smoothed, pruned | Single-pron | config 0 | 63.9% | 2:00 hours |

| 3 | 10k vocab trigram, GT smoothed, pruned | Single-pron | config 9 | 64.0% | 2:00 hours |

Single-pronunciation Triphone Model

I've also used the single-pronunciation dictionary to train a triphone model. The triphone clustering questions can be found in the genTriUnitParams.py script.

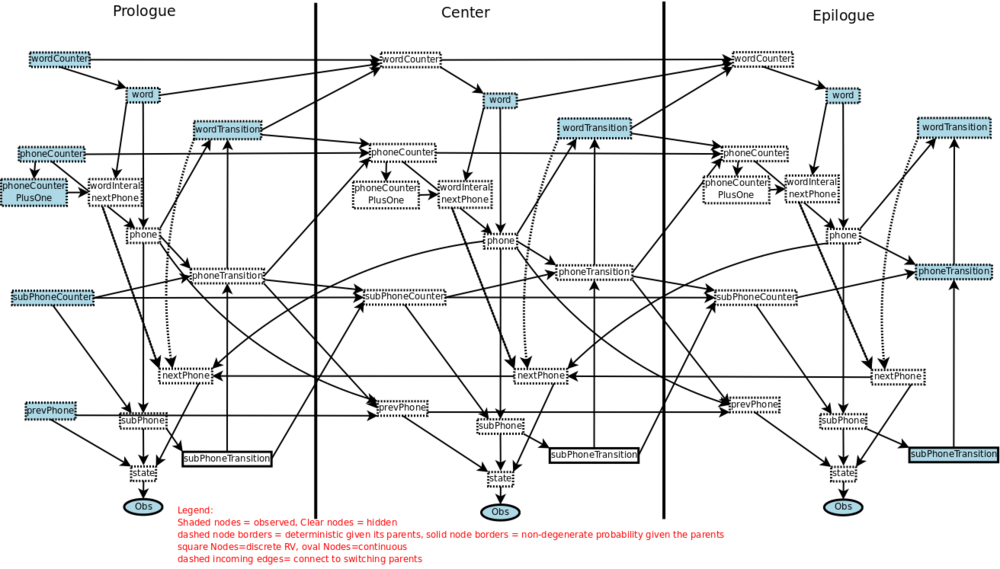

The structure of the graphical model is as follows:

You can also open it in dia format to show/hide the right context/left context layers.
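The word-internal triphone units used below can be illustrated by expanding a single pronunciation into context-dependent labels. This is a sketch of the labeling only, not of the DBN. The HTK-style left-phone+right notation and the example phones are assumptions, and the decision-tree clustering in genTriUnitParams.py is not modeled.

```python
from typing import List


def word_internal_triphones(phones: List[str]) -> List[str]:
    """Expand one word's phones into word-internal triphone labels.

    Contexts never cross the word boundary: the first phone has no left
    context and the last phone has no right context.
    """
    labels = []
    for i, p in enumerate(phones):
        left = phones[i - 1] + "-" if i > 0 else ""
        right = "+" + phones[i + 1] if i < len(phones) - 1 else ""
        labels.append(f"{left}{p}{right}")
    return labels


print(word_internal_triphones(["k", "ae", "t"]))  # ['k+ae', 'k-ae+t', 'ae-t']
```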

WER as a function of number of components

Now that we have more RAM in our cluster, we can try using more components per mixture and see where the WER bottoms out. All experiments use the word-internal triphone config 14 (identical to config 5 except for the number of iterations and Gaussians) and are tested with the 10k vocab trigram (row 3 in the previous table).

| Row | Num Components | Descr | WER |

|---|---|---|---|

| 1 | 1 | | 75.9% |

| 2 | 2 | | 69.6% |

| 3 | 4 | | 65.9% |

| 4 | 8 | | 63.5% |

| 5 | 16 | | 60.5% |

| 6 | 32 | | 57.3% |

| 7 | 64 | | 55.1% |

| 8 | 128 | | 53.4% |

| 9 | 256 (127971 total components) | | 51.9% |

| 10 | (127971 total components, simply an extra iteration) | Row 9 after MCVR=1e20, 1 iteration | 51.6% |

| 11 | (127924 total components) | Row 9 after MCVR=50, 1 iteration | 51.6% |

| 12 | (127761 total components) | Row 9 after MCVR=10, 1 iteration | 51.6% |

| 13 | (120321 total components) | Row 9 after MCVR=2, 1 iteration | 51.5% |

| 14 | (116519 total components) | Row 9 after MCVR=1.8, 1 iteration | 52.1% |

| 15 | (57462 total components) | Row 9 after MCVR=1, 1 iteration | 57.5% |
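One way to read "where the WER bottoms out" off rows 1 to 9 is to look at the absolute gain from each doubling of the components per mixture. The numbers below are taken directly from the table, and the script only takes differences.

```python
# (components per mixture, WER %) from rows 1-9 of the table above
RESULTS = [(1, 75.9), (2, 69.6), (4, 65.9), (8, 63.5), (16, 60.5),
           (32, 57.3), (64, 55.1), (128, 53.4), (256, 51.9)]


def gains_per_doubling(results):
    """Absolute WER reduction (percentage points) obtained by each doubling."""
    return [(n2, round(w1 - w2, 1)) for (_, w1), (n2, w2) in zip(results, results[1:])]


for n, gain in gains_per_doubling(RESULTS):
    print(f"-> {n:>3} components: {gain} points")
```

The gain shrinks to about 1.5 points at 256 components but is still well above zero. This suggests the WER had not yet bottomed out at that size.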

The above suggests that we can set MCVR to a reasonably aggressive value, e.g. 2, without ill effects and perhaps with some benefit. The GMTK documentation (sec 5.3.1) suggests disabling vanishing for fewer than about 32 Gaussians and setting MCVR to 10 for 32 or more.
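The following minimal sketch shows what component vanishing does to one mixture. It assumes that a component is removed when its weight falls below 1/(MCVR × N), where N is the number of components in the mixture, and that the surviving weights are renormalized. That rule is our assumption. It was chosen because with MCVR=2 and 256 components it gives 1/512, the constant discussed in the vanishing experiment below. Check GMTK's documentation for the exact criterion.

```python
from typing import List


def vanish(weights: List[float], mcvr: float) -> List[float]:
    """Remove components with weight < 1/(mcvr * N) and renormalize the rest.

    Assumed rule, not necessarily GMTK's exact one. For mcvr >= 1 the largest
    weight is at least 1/N, so at least one component always survives.
    """
    n = len(weights)
    threshold = 1.0 / (mcvr * n)
    kept = [w for w in weights if w >= threshold]
    if not kept:
        raise ValueError("every component would vanish; use mcvr >= 1")
    total = sum(kept)
    return [w / total for w in kept]


print(vanish([0.5, 0.3, 0.15, 0.05], mcvr=2.0))
```

With weights [0.5, 0.3, 0.15, 0.05], MCVR=2 gives a threshold of 1/8 and drops only the last component. MCVR=1 (threshold 1/4) also drops the 0.15 component. This mirrors how MCVR=1 in row 15 removed far more components than the milder settings in rows 11 to 13.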

WER as a function of number of iterations to convergence

Rows 9 and 10 suggest that 6 iterations are not enough to converge the model, at least at 256 Gaussians. So we test at various numbers of iterations and Gaussians, hoping for a trend that will tell us where more convergence is needed. All tests were done with config 14, which should be identical to config 5.

| Gaussians per mixture\Iter | 0 | 1 | 2 | 3 | 4 | 5 |

|---|---|---|---|---|---|---|

| 1 | 75.3% | 75.8% | 75.7% | 75.8% | 75.9% | 75.6% |

| 4 | 69.2% | 69.1% | 68.4% | 67.2% | 66.1% | 65.9% |

| 16 | 63.2% | 62.8% | 62.2% | 61.4% | 60.6% | 60.5% |

| 64 | 57.3% | 57.5% | 56.8% | 55.8% | 55.8% | 55.1% |

It seems the model could use a few more convergence steps.

More convergence with more aggressive vanishing steps

This is where things get weird. I increased the number of iterations per split to 8 and used more aggressive vanishing on splits. At first I wanted to vanish all components whose weight falls below a (more or less) constant threshold of 1/512. That threshold seemed to give a nice improvement at 256 Gaussians (see WER as a function of number of components).

This worked slightly worse than config 14 above at 128 Gaussians per mixture: 53.6% WER instead of 53.4% (row 8 in WER as a function of number of components).

Trying it again with no vanishing until the last step made things a LOT worse: 54.1% WER.

This should not be, so I tried using a larger test set: 2000 utterances instead of 500.

| Row | Description | train config | WER 500 utt | WER 2000 utt |

|---|---|---|---|---|

| 1 | Baseline (row 8 in WER as a function of number of components) | config 14 | 53.4% | 54.9% |

| 2 | Row 1 above, except 8 iterations to convergence, and vanishing any component with weight less than 1/512 | config 18 | 53.6% | 54.3% |

| 3 | Row 1 above, except 8 iterations to convergence, and vanishing any component with weight less than 1/512 only on the last split step | config 18 | 54.1% | 54.4% |

The tests on 2000 utterances are more in line with what I expected than on 500 utterances, so let's just trust those.
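A rough way to see why 500 utterances can mislead is the binomial standard error of a WER estimate, sqrt(p(1-p)/N) for N reference words. This treats word errors as independent, which they are not, since errors cluster within utterances. It therefore understates the real uncertainty. The 10 words per utterance below is a hypothetical figure; the actual reference word count should be taken from the sclite output.

```python
import math


def wer_std_error(wer: float, num_words: int) -> float:
    """Binomial standard error of a WER estimate (assumes independent word errors)."""
    return math.sqrt(wer * (1.0 - wer) / num_words)


WORDS_PER_UTT = 10  # hypothetical average; use the real reference word count
for utts in (500, 2000):
    se = wer_std_error(0.54, utts * WORDS_PER_UTT)
    print(f"{utts} utts: +/- {100 * se:.2f} points (1 s.e.)")
```

Under these assumptions the standard error at 54% WER is about 0.7 points on 500 utterances and about 0.35 points on 2000. Differences of a few tenths of a point on the 500-utterance test are therefore within noise.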

cross-word left context

Cross-word left context does not slow things down much. The results are below.

Testing is done with the setup of row 3 of the table in Single Pronunciation Dictionary Experiment (10k vocab trigram), on 2000 utterances.

Row 1 here is the same as row 2 in More convergence with more aggressive vanishing steps, above.

| Row | Train config | WER |

|---|---|---|

| 1 | config 18 | ~~54.3%~~ 53.0% |

| 2 | config 16 | ~~55.2%~~ 53.6% |

The struck-out results come from a buggy test in which a bigram was used even when a trigram was specified. Left/right contexts depend on a trigram language model.
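The difference between word-internal and cross-word left context can be illustrated at the label level. With cross-word left context, the first phone of a word takes the last phone of the previous word as its left context, while the right context still stops at the word boundary. This sketch assumes HTK-style left-phone+right labels, illustrative phones, and no intervening silence or pause units. It shows the unit inventory, not the DBN.

```python
from typing import List, Optional


def cross_word_left_triphones(words: List[List[str]]) -> List[str]:
    """Triphone labels whose left context may cross word boundaries.

    The right context stays word-internal, as in the experiment above.
    """
    labels = []
    prev_phone: Optional[str] = None  # last phone of the previous word, if any
    for phones in words:
        for i, p in enumerate(phones):
            if i > 0:
                left = phones[i - 1] + "-"
            elif prev_phone is not None:
                left = prev_phone + "-"   # cross-word left context
            else:
                left = ""                 # utterance start
            right = "+" + phones[i + 1] if i < len(phones) - 1 else ""
            labels.append(f"{left}{p}{right}")
        if phones:
            prev_phone = phones[-1]
    return labels


print(cross_word_left_triphones([["dh", "ax"], ["k", "ae", "t"]]))
```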

cross-word right context

Cross-word right context does not yet triangulate efficiently (Jeff is looking at it), and it slows training by a factor of about 30 relative to word-internal right context. We'll try it once we can speed it up.

Error-Driven unit selection

Deeper Decision trees

The test here is whether my unit selection strategy beats simply increasing the number of mixtures (states) by deepening the decision trees (DTs).

| Row | Description | Total components | Mixtures | Max components per mixture | Train config | WER 2000 utt |

|---|---|---|---|---|---|---|

| 1 | baseline (same as row 2 in More convergence with more aggressive vanishing steps) | 63728 | 503 | 128 | config 18 | 54.3% |

| 2 | row 1 above, but with more DT leaves | 65900 | 1033 | 64 | config 20 | 52.3% (with language model fixed, and perhaps other fixes: 50.3%) |

| 3 | row 1 above, but with even more DT leaves | 61475 | 3845 | 16 | config 21 | 53.7% |

| 4 | row 3 with fewer components | 30742 | 3845 | 8 | config 21 | 56.5% |

| 5 | row 3 with more components | 245479 | 3845 | 64 | config 21 | 49.3% |

| 6 | learned units | xx | xx | xx | xx | xx |

| 7 | more learned units | xx | xx | xx | xx | xx |
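As a consistency check on the table, total components can be compared with the cap of mixtures × max components per mixture. The figures come from the table. Reading the small shortfall as components removed by vanishing is our interpretation, not something stated on this page.

```python
# (row, total components, mixtures, max components per mixture), rows 1-5 above
ROWS = [(1, 63728, 503, 128), (2, 65900, 1033, 64), (3, 61475, 3845, 16),
        (4, 30742, 3845, 8), (5, 245479, 3845, 64)]


def fill_fraction(total: int, mixtures: int, max_comp: int) -> float:
    """Fraction of the mixtures * max_comp cap actually in use."""
    return total / (mixtures * max_comp)


for row, total, mixtures, max_comp in ROWS:
    cap = mixtures * max_comp
    print(f"row {row}: {total} of {cap} ({100 * fill_fraction(total, mixtures, max_comp):.1f}%)")
```

Every row uses at least 98.9% of its cap. Rows 1 to 3 therefore split a roughly constant budget of about 61k-66k components between more mixtures and fewer components per mixture. Rows 4 and 5 roughly halve and quadruple that budget.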

Also see SST:Units Paper